Beyond Sprints: How Fintech Teams Can Adopt Agent-Driven Development

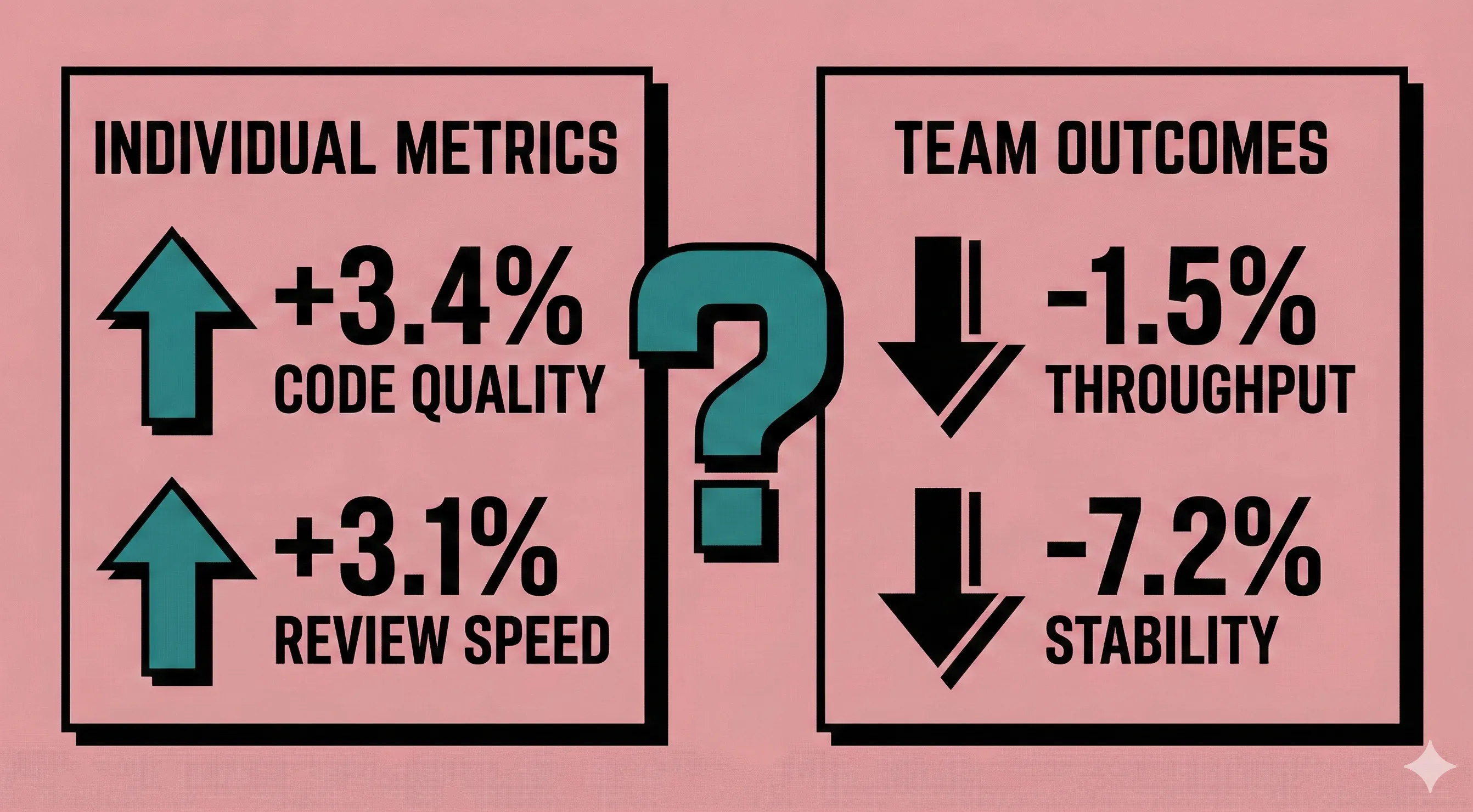

My team started experimenting with AI coding tools about a year ago, and the results have been genuinely confusing. Individual developers feel faster. Code ships sooner. But our overall delivery throughput has not improved the way anyone expected, and our review queues are longer than ever. When DORA's 2024 Accelerate State of DevOps report found that a 25% increase in AI adoption was associated with an estimated 1.5% drop in delivery throughput and a 7.2% drop in delivery stability, it put numbers to something I was already seeing firsthand. The problem is not the tools. The problem is that traditional product development lifecycles (PDLCs) were never designed for a world where code generation outpaces design, review queues balloon with AI-sized PRs, and context evaporates between agent sessions. If your fintech engineering org is bolting AI tools onto an unchanged PDLC, you are accelerating inside a system that cannot absorb the speed.

Here is what an agent-driven PDLC actually looks like, why fintech's regulatory constraints make the transition easier (not harder), and how to manage the change without cratering morale.

Why Your PDLC Breaks When AI Shows Up

Traditional agile assumes human-speed iteration. Two-week sprints, daily standups, retrospectives. These ceremonies exist because humans need coordination rhythms to stay aligned. AI coding assistants do not care about your sprint cadence.

When teams adopt tools like Cursor, Claude Code, or GitHub Copilot without adjusting their process, three friction points emerge almost immediately:

Design becomes the bottleneck. Implementation speed now outpaces design capability. Your designers and product managers were already stretched. When developers can scaffold a feature in an afternoon, the backlog of "waiting for specs" grows instead of shrinking.

Review queues explode. Faros AI's research found that teams with high AI adoption merge 98% more pull requests, but PR review time increases 91%. We have felt this directly. In fintech, where code review is a compliance requirement, this is not just annoying. It is a regulatory risk.

Context collapses between sessions. Traditional PDLCs store context in human memory and meetings. AI agents have no persistent memory across sessions. I have been burned by context loss between agent sessions enough times to appreciate what Chris Lema means when he writes: "Intelligence leaves the context window and enters the file system. Context does not need to survive in memory if it is deliberately exported to files."

DORA calls the root cause the "Vacuum Hypothesis": AI enables faster task completion, but the reclaimed time gets absorbed by lower-value work rather than higher-value activities. Your PDLC determines where that time goes. Without restructuring the lifecycle, AI makes you faster at the wrong things.

Agent-Driven Development Is Not Vibe Coding

Andrej Karpathy coined "vibe coding" in early 2025 for casual, prompt-and-pray development. By early 2026, he was already advocating its successor: agentic engineering, where developers spend most of their time orchestrating AI agents rather than writing code directly.

The BMAD Method (Breakthrough Method for Agile AI-Driven Development) is one of the more fully realized implementations of this idea. At 35,000+ GitHub stars, it is the largest open-source framework in this space. But the important thing is not BMAD specifically. It is the pattern it represents.

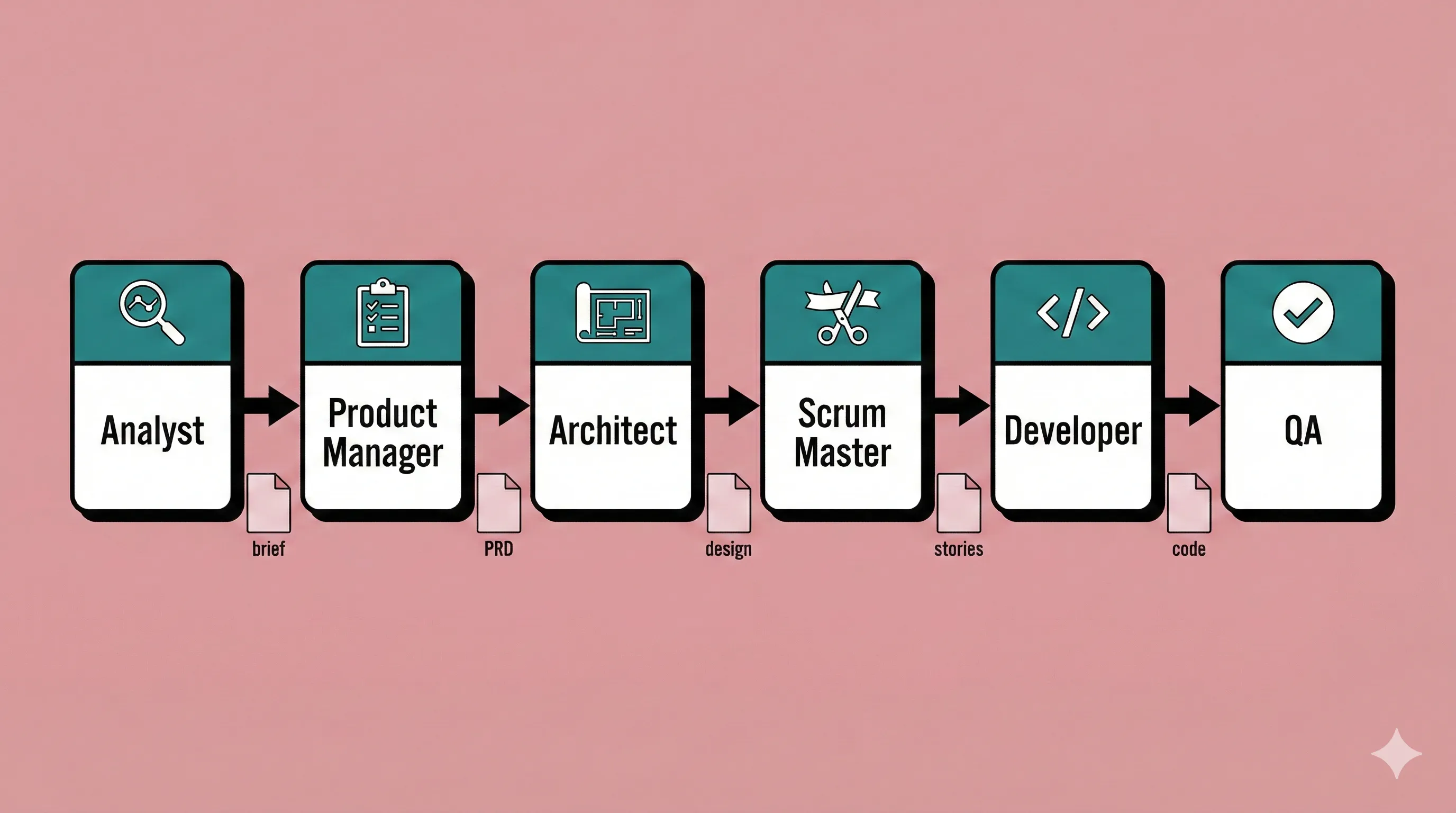

Here is the core shift: instead of AI tools that autocomplete your code, you get AI agents that fill roles in your development lifecycle. BMAD defines specialized agents (Analyst, Product Manager, Architect, Scrum Master, Developer, QA), each with explicit constraints, inputs, and outputs.

A typical flow runs Analyst → Product Manager → Architect → Scrum Master → Developer → QA.

Each agent produces a versioned document. The PM agent outputs a PRD. The Architect agent outputs a technical design referencing that PRD. The Scrum Master "shards" the design into atomic story files, each containing just enough context for a developer agent to implement without loading the entire project.
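BMAD's own sharding is performed by its Scrum Master agent and tooling, and the sketch below is not that implementation. It only illustrates the idea under a simplifying assumption: the design doc is markdown, and each `## ` section is one story. `shard_design`, `StoryFile`, and the header format are hypothetical names chosen for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class StoryFile:
    """One self-contained story: a short header plus a single design section."""
    filename: str
    content: str


def shard_design(design_md: str, prd_ref: str, design_ref: str) -> list[StoryFile]:
    """Split a markdown design doc into one story file per '## ' section (hypothetical scheme)."""
    # Split just before every line that starts with a level-2 heading.
    # '### ' subheadings do not match, so they stay inside their parent story.
    sections = re.split(r"(?m)^(?=## )", design_md)
    stories: list[StoryFile] = []
    for section in sections:
        if not section.startswith("## "):
            continue  # preamble before the first story section
        title = section.splitlines()[0][3:].strip()
        slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
        # Each story carries pointers back to its source documents instead of copying them.
        header = f"Source PRD: {prd_ref}\nSource design: {design_ref}\n\n"
        filename = f"story-{len(stories) + 1:03d}-{slug}.md"
        stories.append(StoryFile(filename, header + section.strip() + "\n"))
    return stories
```

A developer agent then loads one `story-NNN-*.md` file rather than the whole project. Pointing back to the PRD and design doc, rather than inlining them, is what keeps each file small.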

The key insight is where context lives. In traditional AI-assisted development, context lives in conversation history and disappears when the session ends. In agent-driven development, context lives in versioned files in your repo. Every decision, requirement, and constraint is encoded in documents that any agent (or human) can read.

BMAD claims its sharding mechanism cuts the tokens needed to load story context by 90% compared to loading full project documentation. That matters for cost, but it matters more for accuracy: smaller, focused context windows tend to produce fewer hallucinations.

Other frameworks share this philosophy. GitHub's Spec Kit takes a lighter approach with four phases (Specify, Plan, Tasks, Implement) that work with any AI tool. AWS Kiro generates specification files from natural language. The common thread: specifications become the source of truth, not code.

The Scott Logic team benchmarked spec-driven development against iterative prompting and found it roughly 10x slower for small projects: over 2,500 lines of planning markdown for a few hundred lines of code. That overhead is real. But their own conclusion was telling: the structured approach "might suit teams lacking deep technical expertise better than experienced architects." For a 100-person fintech org where not every developer is a senior architect, that tradeoff looks different than it does for a solo developer.

Fintech Compliance Actually Helps Here

Here is the counterintuitive part: fintech's regulatory burden makes agent-driven development more viable, not less.

PCI DSS 4.0.1 already requires what BMAD provides. As of March 2025, PCI DSS mandates security testing throughout development (not just before release), code review for security impact before merging, and SAST execution on every pull request. The PCI Security Standards Council explicitly requires human-in-the-loop oversight when AI is used in payment environments. AI must be treated as a "potential malicious insider" during threat analysis.

These sound like constraints. They are actually a forcing function for doing agent-driven development properly:

- Human-in-the-loop? That is the orchestrator model. The developer reviews and approves agent output at each stage rather than rubber-stamping raw AI code.

- Audit trails? Every BMAD artifact is versioned in Git. The progression from product brief to PRD to architecture doc to story to implementation is a traceable chain an auditor can follow.

- Security scanning on every PR? Agent-driven workflows already gate on CI/CD checks. Adding SAST, DAST, and SCA to the pipeline is a configuration change, not a process change.
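A chain an auditor can follow only holds if every artifact points back to the one it came from. Here is a minimal sketch of an automated check, assuming each artifact records a hypothetical `parent` id (for example in front matter or a PR description). `Artifact`, `trace`, and the kind names are illustrative and not part of BMAD.

```python
from dataclasses import dataclass
from typing import Optional

# Expected order of artifact kinds, from product brief to merged code.
CHAIN = ["brief", "prd", "architecture", "story", "implementation"]


@dataclass(frozen=True)
class Artifact:
    id: str                # e.g. a file path or PR number (hypothetical scheme)
    kind: str              # one of CHAIN
    parent: Optional[str]  # id of the artifact this one was derived from


def trace(artifacts: dict[str, Artifact], start_id: str) -> list[str]:
    """Walk parent links back to the brief, checking that each step moves
    exactly one stage up CHAIN. Returns ids ordered from brief to start_id.
    Raises ValueError on an unknown id, a missing link, or an out-of-order parent."""
    current = artifacts.get(start_id)
    if current is None:
        raise ValueError(f"unknown artifact {start_id!r}")
    path = []
    while True:
        path.append(current.id)
        if current.kind == "brief":
            return list(reversed(path))
        if current.parent is None or current.parent not in artifacts:
            raise ValueError(f"{current.id!r} has no traceable parent")
        parent = artifacts[current.parent]
        # Kinds strictly move toward 'brief', so the loop cannot cycle.
        if CHAIN.index(parent.kind) != CHAIN.index(current.kind) - 1:
            raise ValueError(f"{current.id!r} ({current.kind}) links to a {parent.kind}")
        current = parent
```

Run over every merged PR in a release, this flags implementation work that skipped a story or jumped straight from an architecture doc to code.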

SOC 2 compliance maps cleanly to agent workflows. SOC 2 now requires accounting for AI-specific risks across all five trust service criteria. The key requirements (access controls to LLMs, monitoring for unintended use, SDLC controls for AI systems, vendor controls for AI tool providers) are addressed by a structured agent framework more naturally than by ad hoc tool usage.

BMAD's Enterprise Track adds a Security Auditor agent for threat modeling, code review gates with static analysis and coverage requirements, and change record linkage between code modifications and PRD changes. This creates what amounts to a continuous compliance ledger.

The practical implication: retrofitting compliance onto unstructured AI usage is expensive and fragile. Building compliance into the agent workflow from day one is cheaper. Organizations that embed compliance into system architecture from the outset scale faster than those relying on manual reviews.

What a Phased Rollout Looks Like

Plaid got over 75% of their engineers regularly using AI coding tools within six months. I am running a similar transition in fintech, and their approach is the closest public case study to what I am working through.

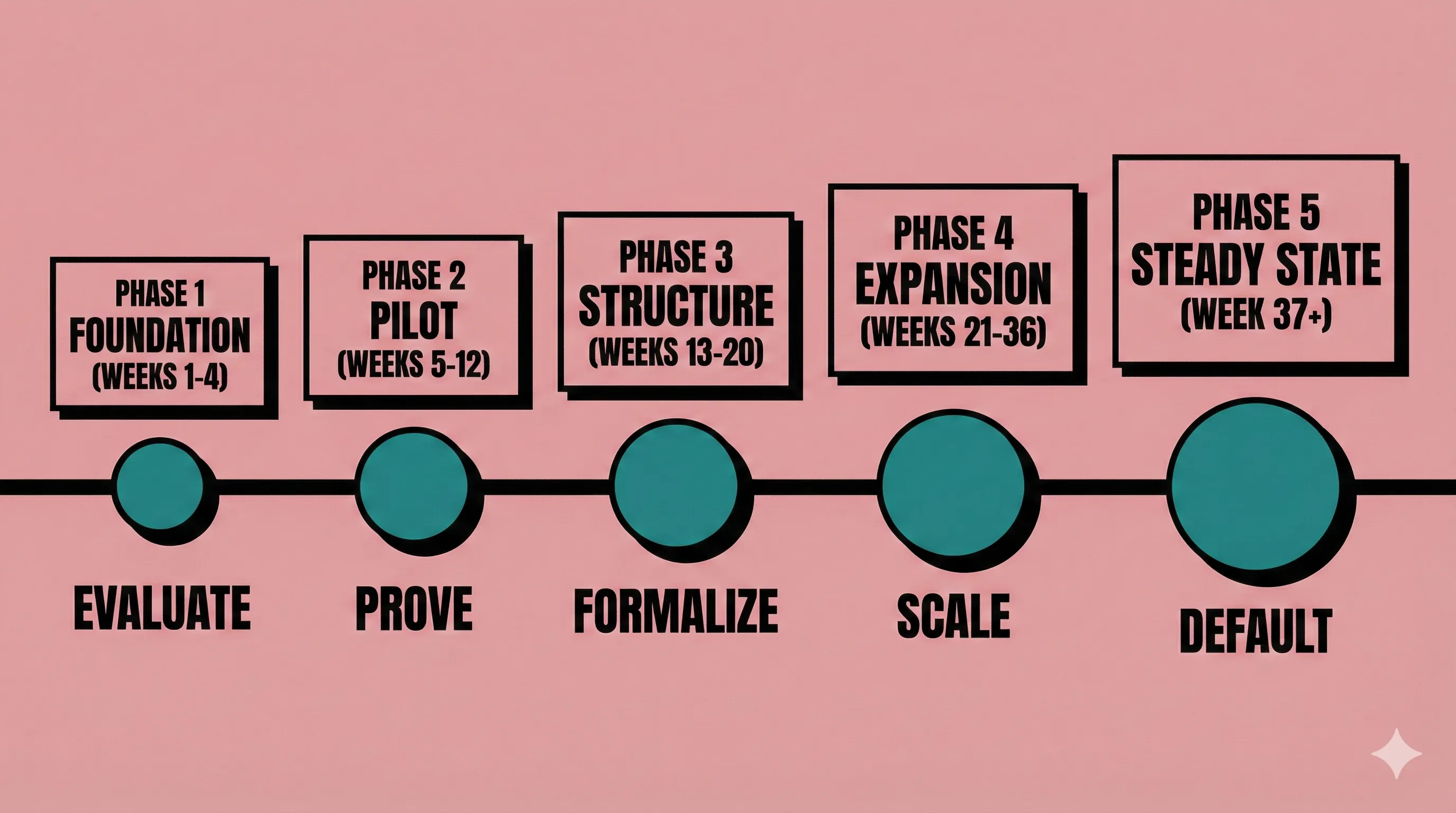

Here is the phased rollout I have been building from Plaid's experience, Faros AI's scaling framework, and DORA's adoption research, adapted for a 50-200 person fintech engineering org:

Phase 1: Foundation (Weeks 1-4)

Goal: Compliance-first tool evaluation.

Before anyone writes a prompt, your legal and compliance teams need a classification framework. Plaid built one based on inputs (what data is sent, where it goes) and outputs (what the tool returns, where it is stored).

Evaluate candidate tools against PCI DSS 4.0.1 and SOC 2 requirements. GitHub Copilot Enterprise has PCI-DSS v4.0 attestation. Amazon Q Developer inherits 143 AWS compliance certifications. Augment Code holds SOC 2 Type II and ISO/IEC 42001. Not every tool will pass. Some popular AI coding assistants lack documented SOC 2 certification.

Establish data classification for what code and data can be sent to which tools. Payment-processing code has different rules than marketing site code.
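One way to make such a classification enforceable is a small policy table that maps repository paths to data classes and each approved tool to the most sensitive class it may see. This is a sketch only: the paths, tool names, and levels are hypothetical placeholders for whatever your legal and compliance review actually decides.

```python
from enum import IntEnum
from fnmatch import fnmatch


class DataClass(IntEnum):
    """Higher value means more sensitive. Levels are illustrative."""
    PUBLIC = 0
    INTERNAL = 1
    CARDHOLDER = 2  # code that touches cardholder data (PCI DSS scope)


# Hypothetical path rules, checked in order; first match wins.
PATH_RULES = [
    ("services/payments/*", DataClass.CARDHOLDER),
    ("services/*", DataClass.INTERNAL),
    ("marketing-site/*", DataClass.PUBLIC),
]

# Hypothetical tools and the most sensitive class each one is approved for.
TOOL_CEILING = {
    "tool-a-enterprise": DataClass.CARDHOLDER,
    "tool-b": DataClass.INTERNAL,
    "tool-c-unvetted": DataClass.PUBLIC,
}


def classify(path: str) -> DataClass:
    for pattern, data_class in PATH_RULES:
        if fnmatch(path, pattern):  # fnmatch's '*' also matches '/'
            return data_class
    return DataClass.CARDHOLDER  # unknown paths default to the strictest class


def allowed(tool: str, path: str) -> bool:
    # Unknown tools get -1, so they are never allowed.
    return TOOL_CEILING.get(tool, -1) >= classify(path)
```

Defaulting unknown paths to the strictest class means a new payment service is protected before anyone remembers to add a rule for it.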

Phase 2: Controlled Pilot (Weeks 5-12)

Goal: Prove the workflow on low-risk code.

Select 2-3 teams for controlled adoption. Target teams working on non-payment-critical codebases: internal tooling, documentation systems, developer productivity infrastructure.

Plaid found that the most successful teams "often had engineering managers who were already excited about AI." Target those EMs first.

Deploy measurement infrastructure from day one. Plaid built an internal dashboard tracking usage, retention by team cohorts, and satisfaction. When users churned, team members personally reached out to understand why.
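Plaid's dashboard internals are not public. As a rough illustration of team-level retention, here is a sketch that assumes a hypothetical event log of `(team, user_id, week_index)` tuples, one per week a developer used the tool. It reports, for each team and week, the share of that week's users who came back the following week.

```python
from collections import defaultdict


def weekly_retention(events):
    """events: iterable of (team, user_id, week_index) tuples (hypothetical schema).
    Returns {team: {week: share of that week's users also active the next week}}."""
    active = defaultdict(lambda: defaultdict(set))  # team -> week -> set of users
    for team, user, week in events:
        active[team][week].add(user)
    if not active:
        return {}
    # Use the latest week across all teams, so a team that went silent shows 0.0.
    latest = max(week for weeks in active.values() for week in weeks)
    result = {}
    for team, weeks in active.items():
        result[team] = {
            week: len(users & weeks.get(week + 1, set())) / len(users)
            for week, users in sorted(weeks.items())
            if week < latest  # the latest week has no "next week" to compare yet
        }
    return result
```

A sharp drop for one team is the cue to go talk to that team, which is the follow-up Plaid describes.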

Run the pilot with a structured method. Start with BMAD's Quick Flow (codebase analysis, implementation, code review) before introducing the full planning path. Let teams experience the constraint-based approach on real work.

Phase 3: Structured Method Introduction (Weeks 13-20)

Goal: Move from "AI-assisted coding" to "agent-driven development."

Introduce the full agent workflow on pilot teams. Map your existing PDLC ceremonies to agent roles:

- Sprint planning becomes story sharding, where the Scrum Master agent breaks architecture docs into atomic, context-rich story files

- Code review becomes multi-layer validation combining automated security scanning, QA agent review, and human approval

- Retrospectives add a standing question: "How is AI affecting our work?" Scrum.org identifies the absence of this reflection as a common anti-pattern

Integrate agent quality gates into CI/CD. Every PR from an agent-driven workflow runs SAST (supporting the pre-release code review in PCI DSS Requirement 6.2.3), coverage checks, and architectural lint rules.
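The multi-layer validation described above can end in one merge decision. The sketch below assumes a CI job has already collected results from your SAST, coverage, and lint tools into a hypothetical `PrChecks` record. The 80% coverage threshold is an assumption, and the human-approval check encodes the human-in-the-loop requirement.

```python
from dataclasses import dataclass


@dataclass
class PrChecks:
    """Results a CI job might collect for one pull request (hypothetical fields)."""
    sast_high_findings: int  # unresolved high/critical SAST findings
    line_coverage: float     # 0.0 to 1.0, from your coverage tool
    lint_violations: int     # architectural lint rule violations
    human_approvals: int     # approving reviews from humans, not agents


def gate(checks: PrChecks, min_coverage: float = 0.8) -> list[str]:
    """Return the reasons to block the merge; an empty list means the PR passes."""
    failures = []
    if checks.sast_high_findings > 0:
        failures.append(f"{checks.sast_high_findings} high-severity SAST finding(s)")
    if checks.line_coverage < min_coverage:
        failures.append(f"coverage {checks.line_coverage:.0%} below {min_coverage:.0%}")
    if checks.lint_violations > 0:
        failures.append(f"{checks.lint_violations} architectural lint violation(s)")
    if checks.human_approvals < 1:
        failures.append("no human approval")
    return failures
```

Returning every reason, rather than stopping at the first failure, gives the reviewer and the audit log the full picture in one pass.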

Phase 4: Measured Expansion (Weeks 21-36)

Goal: Scale to the broader org.

Follow the expansion sequence: replicate to similar teams first, then adapt for specialized teams, then address holdout teams with customized support.

Plaid encouraged "dual wielding," letting engineers use new AI tools alongside their existing IDE rather than forcing replacement. Teams can operate under the structured agent workflow for new features while maintaining existing processes for maintenance work, converging over time.

Success criteria per expansion wave (from Faros AI): 60%+ daily active usage, quality metrics preserved or improved, 30+ minutes saved per developer daily, developer NPS above 30.
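These criteria are easy to check mechanically. The sketch below uses the standard Net Promoter Score definition (percentage of promoters scoring 9 or 10, minus percentage of detractors scoring 0 to 6). `WaveMetrics` and its fields are hypothetical, including the assumption that a non-negative `quality_delta` means quality was preserved.

```python
from dataclasses import dataclass


def nps(scores: list[int]) -> float:
    """Net Promoter Score from 0-10 survey answers, between -100 and 100."""
    if not scores:
        raise ValueError("no survey responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


@dataclass
class WaveMetrics:
    daily_active_share: float     # daily active users / developers in the wave
    quality_delta: float          # change in your chosen quality metric; >= 0 means preserved
    minutes_saved_per_dev: float  # measured where possible, not self-reported
    survey_scores: list[int]      # 0-10 answers to "would you recommend this tool?"


def wave_passes(m: WaveMetrics) -> dict[str, bool]:
    """Faros AI criteria: 60%+ daily use, quality preserved, 30+ min/day saved, NPS above 30."""
    return {
        "daily_active_60pct": m.daily_active_share >= 0.60,
        "quality_preserved": m.quality_delta >= 0,
        "saves_30_min": m.minutes_saved_per_dev >= 30,
        "nps_above_30": nps(m.survey_scores) > 30,
    }
```

For example, scores of `[10, 9, 8, 7, 3]` give two promoters and one detractor out of five responses, an NPS of 20, which would not clear the bar.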

Phase 5: Steady State (Week 37+)

Goal: Agent-driven development is the default.

The structured workflow is now the standard PDLC. Quarterly compliance reviews verify audit trail completeness. Model version tracking ensures you can tell auditors exactly which AI version generated code in any given release.
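One lightweight way to support this, assuming your team agrees to add a git trailer such as `AI-Model: <model-version>` to commits that contain agent-generated code, is to count trailers across a release's commit messages (which you could pull from `git log` over the release's tag range). The trailer key and `models_in_release` are hypothetical conventions, not a standard.

```python
from collections import Counter

TRAILER = "AI-Model"  # hypothetical trailer key your team agrees on


def models_in_release(commit_messages: list[str]) -> Counter:
    """Count commits per AI model version, read from 'AI-Model: <version>' lines
    in each commit message's final paragraph (where git trailers live).
    Commits without the trailer are counted under 'none'."""
    counts = Counter()
    for message in commit_messages:
        last_paragraph = message.strip().split("\n\n")[-1]
        found = [
            line.split(":", 1)[1].strip()
            for line in last_paragraph.splitlines()
            if line.lower().startswith(TRAILER.lower() + ":")
        ]
        # A commit may record several models (e.g. one for code, one for tests).
        for model in found or ["none"]:
            counts[model] += 1
    return counts
```

The "none" bucket matters as much as the named models: it tells an auditor how much of the release was written without agent involvement, or where someone forgot the trailer.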

Change Management That Does Not Tank Morale

Here is the tension I am navigating, and you probably are too: the 2025 Stack Overflow Developer Survey found that 84% of developers use or plan to use AI tools, but only 29% trust AI accuracy, down from 40% the previous year. Adoption is up. Trust is down. That gap is where morale problems live.

A study by METR made this personal for me: 16 experienced open-source developers completed 246 tasks. When using AI tools, they were actually 19% slower, but predicted beforehand that AI would make them 24% faster. After the study, they still believed AI had sped them up by 20%. I have caught myself in this same trap. You cannot rely on self-reported productivity to guide this transition. Measure outcomes directly.
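Measuring outcomes directly can start very simply. The sketch below is a much cruder comparison than METR's analysis: it assumes tasks were randomly assigned to "AI allowed" and "no AI" conditions and compares mean completion times. The function name and inputs are hypothetical.

```python
from statistics import mean


def time_change(ai_minutes: list[float], no_ai_minutes: list[float]) -> float:
    """Percent change in mean task time with AI versus without.
    Positive means tasks took longer with AI. Assumes random assignment of
    tasks to conditions so the two groups are comparable; raises
    statistics.StatisticsError if either list is empty."""
    return 100 * (mean(ai_minutes) / mean(no_ai_minutes) - 1)
```

A result of +19 corresponds to the kind of slowdown METR reported. Setting it next to what developers say in a survey is the quickest way to see whether your team has the same perception gap.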

What the Data Says Works

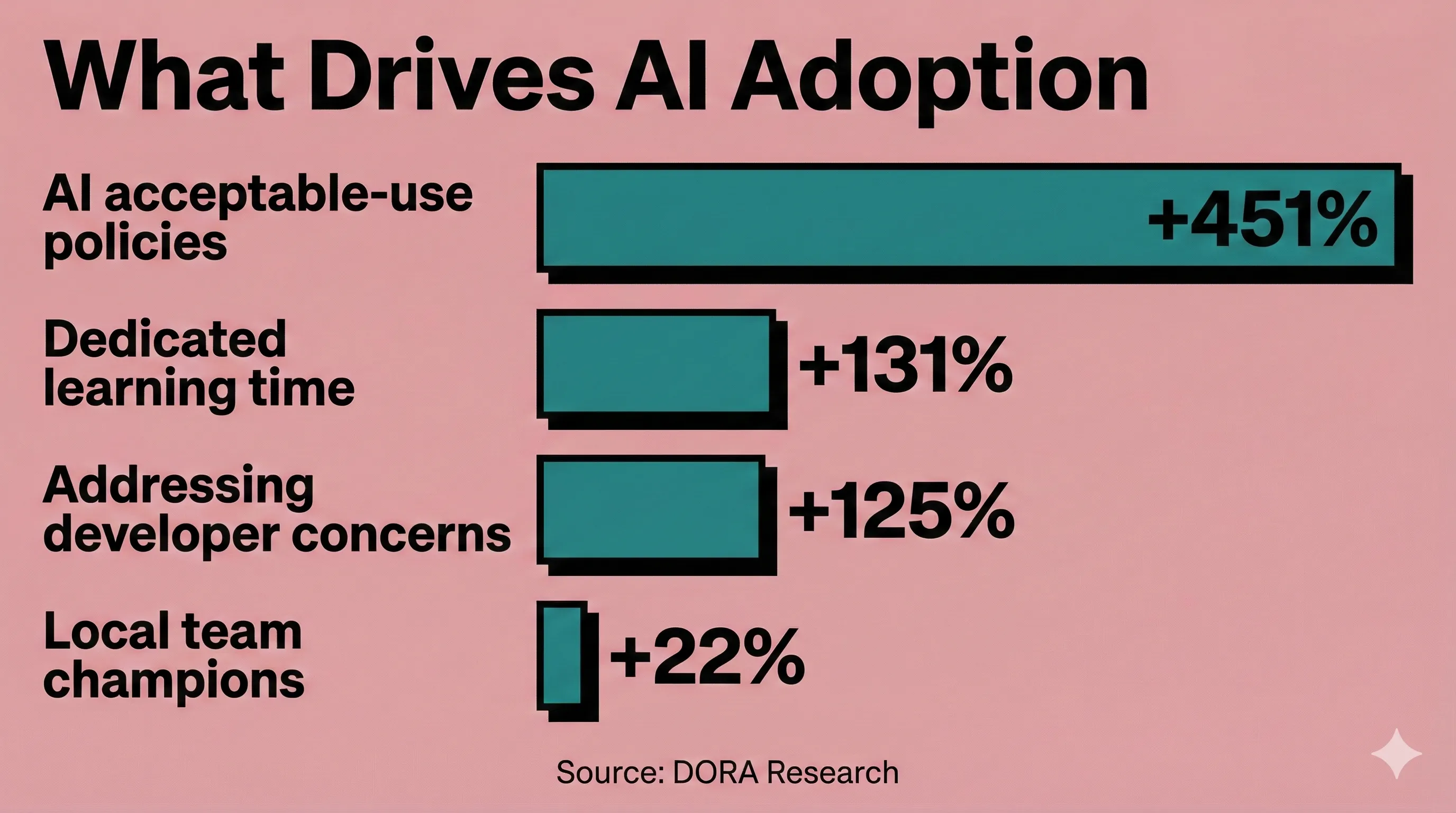

Dedicated learning time produces 131% more adoption than mandates. DORA's research is unambiguous: reducing productivity expectations during the adoption phase and providing collaborative learning formats (hackathons, communities of practice) dramatically outperforms mandatory training.

Addressing developer concerns yields 125% more adoption than ignoring them. Name the fears out loud. Scrum.org's analysis of 166 AI transformation anti-patterns found that when leadership avoids acknowledging job displacement concerns, resistance goes underground. People disengage or quietly sabotage.

Champions programs are the single most recommended tactic across every source. DORA found that developers are 7x more likely to be daily AI users when leaders actively promote tools. Teams with local champions (respected, experienced engineers, not just enthusiastic juniors) see 22% greater adoption. Allocate 10-15% dedicated time for champion activities.

Organizations with clear AI acceptable-use policies see 451% more adoption than those without. Paradoxically, policies may cause a short-term dip as developers exercise more judicious decision-making, but that is a sign of responsible adoption, not resistance.

What the Data Says Fails

Banking productivity savings before learning is complete. Klarna replaced 700 customer service workers with AI, quality declined, customer satisfaction dropped, and their CEO publicly admitted: "We focused too much on efficiency and cost. The result was lower quality." They are now rehiring. Forrester reports that 55% of employers regret laying off workers for AI.

Measuring productivity too early. MIT research documents that AI-adopting firms outperform peers, but only after navigating an initial productivity dip. Premature measurement ignores ramp-up time, the need for oversight, and new tasks like prompt engineering and output verification.

Public leaderboards and individual pressure. Pressuring individual developers through usage leaderboards leads to disengagement and mistrust. Monitor usage across groups, not individuals.
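A group-only report still leaks individual data when a "group" is two people. Here is a sketch that averages usage per group and suppresses any group below a minimum size. The threshold of 5 and the function name are assumptions, not figures from the sources above.

```python
from collections import defaultdict
from typing import Optional

MIN_GROUP_SIZE = 5  # assumed threshold; smaller groups are close to individual views


def usage_by_group(user_usage: dict[str, float], user_group: dict[str, str]) -> dict[str, Optional[float]]:
    """Average usage per group. Groups smaller than MIN_GROUP_SIZE report None,
    so the dashboard never shows a near-individual number."""
    members: dict[str, list[float]] = defaultdict(list)
    for user, value in user_usage.items():
        members[user_group.get(user, "unassigned")].append(value)
    return {
        group: (sum(values) / len(values) if len(values) >= MIN_GROUP_SIZE else None)
        for group, values in members.items()
    }
```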

The "License-and-Hope" anti-pattern. Buying AI tools and hoping people adopt them without changing incentives or processes. Measuring success by login rates rather than outcomes.

Frame It as Career Growth

I have started framing AI adoption as career growth in one-on-ones with my team, and the data supports the approach. The BairesDev Dev Barometer reports that 37% of developers say AI has already expanded their career opportunities, and 65% expect their role to be redefined in 2026, moving from routine coding toward architecture, integration, and AI-enabled decision-making.

The framing matters. Stop discussing AI as a replacement and position it as an amplifier. Addy Osmani calls it the "force multiplier" model: AI handles boilerplate, initial test cases, and pattern implementation. Developers move toward architecture, security review, and complex problem-solving. That work was always more valuable but got crowded out by implementation grind.

Design skills now surpass coding as the most in-demand competency for AI-specific roles. Communication, leadership, and collaboration are in the top 10. The developers who learn to orchestrate agents effectively are not becoming obsolete. They are becoming more valuable.

What Comes Next

Scrum.org's analysis of 166 AI transformation anti-patterns found that 65% are organizational failures: governance, roles, process, culture. Only 22% are technical failures. The technology works. The organization has to catch up.

The shift from sprint boards to agent orchestration is not a tooling decision. It is a PDLC redesign that happens to use AI as the execution layer. For fintech teams, the regulatory constraints you already operate under (audit trails, human oversight, security gates) are not obstacles to this transition. They are the scaffolding.

Start with one team, one structured workflow, one quarter of patience. Measure outcomes, not activity. Name the fears your team will not say out loud. And resist the urge to announce productivity gains before anyone has had time to learn.